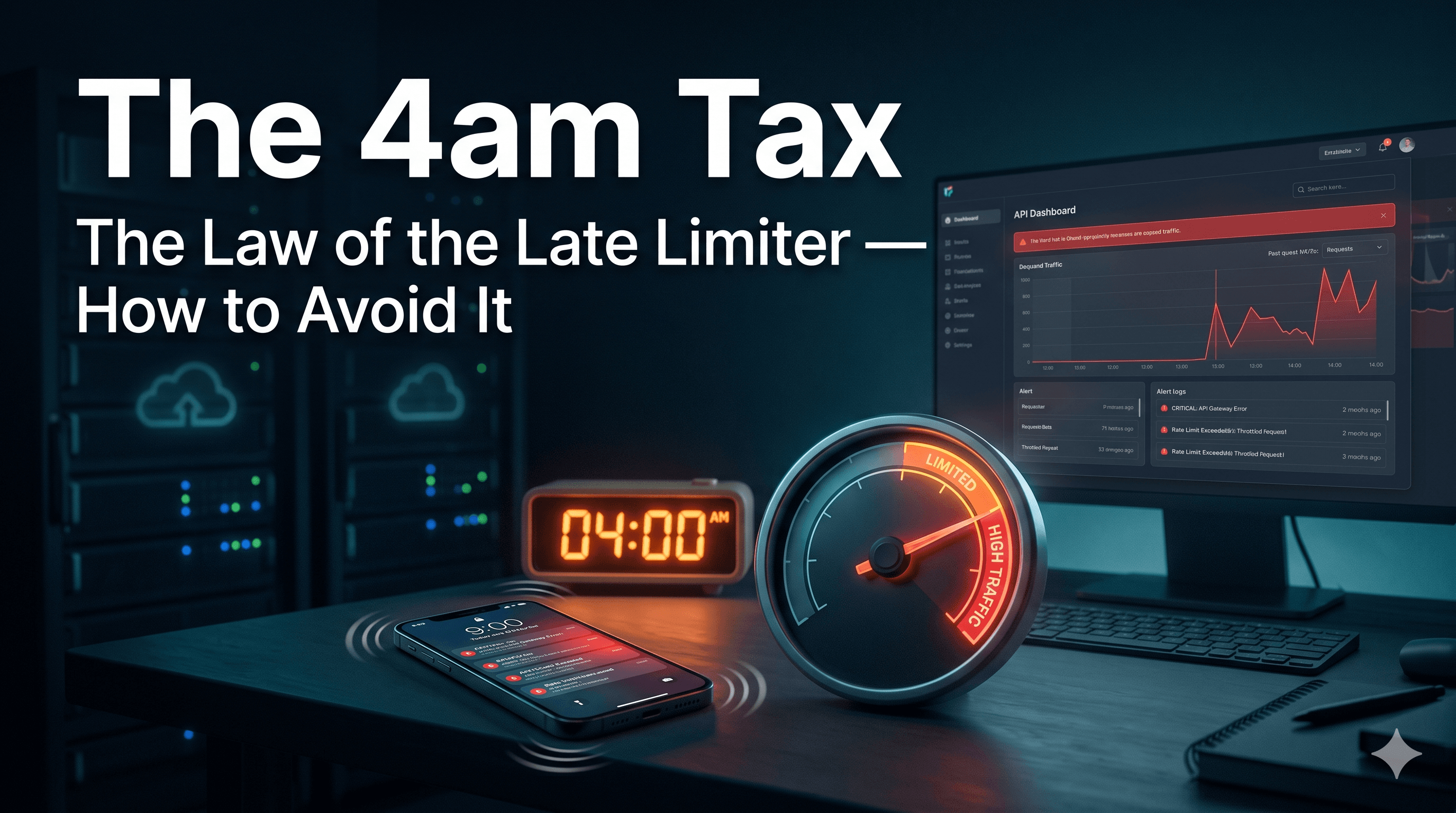

The Law of the Late Limiter: The 4am Tax of APIs (How to Avoid It)

Every API pays this tax. The only question is when.

Have you ever wondered why every API eventually builds a rate limiter, and how to pick the right one?

There's a tax every API pays:

You can pay it upfront, when you have time and your servers are calm.

Or you can pay it at 4am, on a Tuesday, while your phone won't stop vibrating and your CTO is asking why the dashboard is on fire.

Most teams pay it at 4am. That's the 4am Tax. The cost of not building a rate limiter until you needed one yesterday.

Law of the Late Limiter

Every team I've watched goes through the same five days.

Day 1: "We don't need one yet."

Day 30: "Should we add one?"

Day 90: Gets DDoSed.

Day 91: Rate limiter shipped in panic.

Day 180: Arguing in Slack about which algorithm.

This is the Law of the Late Limiter: you always build the rate limiter the week after you needed it.

So what is it, actually?

A rate limiter is a thing that says "no" politely. It sits somewhere in front of your API and counts.

Too many requests from one IP? Slow them down.

Too many from one user? Send back a 429.

Too many to a specific endpoint? Queue or drop.

The benefits are boring. Which is the point: boring infra is the most load-bearing infra.

Stops your servers from falling over.

Stops your AWS bill from looking like a phone number.

Stops some seasoned hacker's while true loop from taking down your weekend. That's the whole job!

Where do you put it?

Three options. Roughly in order of "how badly will this end":

Client-side. The web equivalent of asking thieves to please not steal. Anyone can edit JavaScript. Don't!

Inside your app. Works, but every microservice reinvents it, every restart loses state, and every new team writes it differently. Death by duplication.

A dedicated layer in front. An API Gateway (Kong, Apigee, AWS API Gateway), Nginx, or a custom middleware. The limiter stands at the door, not at every desk inside the building.

The third one wins. Almost always.

You probably want two doors

This part isn't in most rate-limiter chapters. But it's how real systems work.

Door 1: Cloudflare at the edge. It only knows your IP and how often you're knocking. It catches DDoS, scrapers, bot armies. It has no idea who you are.

Door 2: API Gateway at your application's front. It knows you're User 47, on the Pro plan, with a quota of 5 orders per minute.

Cloudflare drops obvious garbage before it costs you a dollar.

The Gateway enforces business rules after authentication.

Layered defence. Each door knows things the other can't.
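A rough sketch of the two doors, assuming toy in-memory counters for a single minute (window resets are left out). The names edge_door, gateway_door, EDGE_LIMIT_PER_IP and PLAN_QUOTAS are made up for illustration; nothing here is Cloudflare's or any gateway's actual API.

```python
from collections import Counter

EDGE_LIMIT_PER_IP = 1000               # hypothetical edge threshold per IP
PLAN_QUOTAS = {"free": 1, "pro": 5}    # hypothetical orders-per-minute quotas

ip_hits = Counter()     # what Door 1 knows: IP -> hits
user_hits = Counter()   # what Door 2 knows: user -> hits


def edge_door(ip: str) -> bool:
    """Door 1: only sees the IP and how often it knocks."""
    ip_hits[ip] += 1
    return ip_hits[ip] <= EDGE_LIMIT_PER_IP


def gateway_door(user_id: str, plan: str) -> bool:
    """Door 2: runs after authentication, so it knows the user and the plan."""
    user_hits[user_id] += 1
    return user_hits[user_id] <= PLAN_QUOTAS[plan]


def handle(ip: str, user_id: str, plan: str) -> str:
    if not edge_door(ip):
        return "dropped at edge"      # never reaches the application
    if not gateway_door(user_id, plan):
        return "429 from gateway"     # business rule, not an attack
    return "ok"


# User 47 on the Pro plan places six orders in the same minute.
responses = [handle("203.0.113.9", "user-47", "pro") for _ in range(6)]
```

The sixth order is the first one the gateway refuses, while the edge door never objects: one polite user is nowhere near a volumetric threshold.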

Bucket Roulette

The internet has converged on five algorithms. They all sound clever. They all leak somewhere. Every team picks the wrong bucket twice before settling on the right one. That's Bucket Roulette.

Token Bucket

A tip jar that refills steadily. One coin per second, max ten. Every request takes a coin. No coin? 429.

Allows bursts. Memory-efficient. Used by Stripe, AWS API Gateway, and most of the internet. Stripe actually runs four different limiters in production: two rate limiters and two load shedders (Stripe Engineering, 2017).
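Here's the tip jar as a minimal sketch, assuming one bucket per client held in memory and a clock passed in by the caller (so it's easy to test). The class name and parameters are illustrative, not taken from Stripe or AWS.

```python
class TokenBucket:
    """Token bucket: refills at `rate` tokens per second, holds at most `capacity`."""

    def __init__(self, rate: float, capacity: float, now: float = 0.0):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity    # start full, so an initial burst is allowed
        self.last = now

    def allow(self, now: float) -> bool:
        # Top up for the time elapsed since the last call, capped at capacity.
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1      # this request takes a coin
            return True
        return False              # no coin: the caller would answer 429


# One coin per second, max ten.
bucket = TokenBucket(rate=1, capacity=10)
burst = [bucket.allow(now=0.0) for _ in range(11)]   # eleven requests at once
after_refill = bucket.allow(now=1.0)                 # one second later
```

The first ten requests in the burst pass, the eleventh is refused, and a second later exactly one more coin is available.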

Leaking Bucket

A sink with a fixed-size drain.

Requests pour in. The drain releases them at a steady, boring rate. Sink overflows? Requests spill.

No bursts. Smooth output. Right when whatever sits behind the limiter can only handle a steady rate: payment processors, anything fragile.
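A sketch of the sink, assuming the queue-based flavour of the algorithm (a bounded queue plus a drain that a worker calls periodically). The names LeakyBucket, offer and drain are made up for this example.

```python
from collections import deque


class LeakyBucket:
    """Leaky bucket as a bounded queue drained at a fixed rate."""

    def __init__(self, capacity: int, leak_rate: float, now: float = 0.0):
        self.capacity = capacity      # how many requests the sink can hold
        self.leak_rate = leak_rate    # requests released per second
        self.queue = deque()
        self.last_leak = now

    def offer(self, request) -> bool:
        """Queue a request; return False if the sink is full (the request spills)."""
        if len(self.queue) >= self.capacity:
            return False
        self.queue.append(request)
        return True

    def drain(self, now: float) -> list:
        """Release the requests the drain has had time to let through."""
        due = int((now - self.last_leak) * self.leak_rate)
        if due <= 0:
            return []
        self.last_leak += due / self.leak_rate
        return [self.queue.popleft() for _ in range(min(due, len(self.queue)))]


sink = LeakyBucket(capacity=3, leak_rate=1)
accepted = [sink.offer(f"req{i}") for i in range(5)]   # five arrive at once
first = sink.drain(now=1.0)    # one second of draining
later = sink.drain(now=3.0)    # two more seconds
```

Five requests pour in, three fit, two spill. Downstream then sees one request per second no matter how spiky the input was.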

Fixed Window Counter

At 12:00, the counter resets. You get 100 requests until 12:01. Repeat. Works fine.

Until someone fires 100 requests at 12:00:59 and another 100 at 12:01:00.

Boundary problem. You just allowed 200 requests in 1 second.

Cheap to store. Easy to break.
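The boundary problem is easy to reproduce. A small sketch, assuming a single in-memory counter and timestamps in seconds since 12:00:00 (so 59.0 is 12:00:59 and 60.0 is 12:01:00). The class name is illustrative.

```python
class FixedWindowCounter:
    """Fixed window: one counter that resets when the clock enters a new window."""

    def __init__(self, limit: int, window_seconds: int):
        self.limit = limit
        self.window_seconds = window_seconds
        self.window_id = None
        self.count = 0

    def allow(self, now: float) -> bool:
        window_id = int(now // self.window_seconds)
        if window_id != self.window_id:   # new window: counter resets
            self.window_id = window_id
            self.count = 0
        if self.count < self.limit:
            self.count += 1
            return True
        return False


limiter = FixedWindowCounter(limit=100, window_seconds=60)
late = sum(limiter.allow(now=59.0) for _ in range(100))    # 100 requests at 12:00:59
early = sum(limiter.allow(now=60.0) for _ in range(100))   # 100 more at 12:01:00
```

Both batches are allowed in full, so 200 requests get through about a second apart even though the limit says 100 per minute.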

Sliding Window Counter

Imagine the rule is "100 requests in the last 60 seconds, whatever 60 seconds you pick." That's a moving window. As time passes, the window slides forward. Older requests fall out the back. New ones come in the front.

Now instead of remembering every individual request, we cheat. We keep just two numbers per user:

How many requests were in the previous full minute (a single count)

How many requests are in the current minute so far (a single count)

That's it. Two numbers. No timestamps.

Why two counters work

When a request comes in at, say, 12:01:24, the "last 60 seconds" stretches from 12:00:24 to 12:01:24.

That window straddles two of your stored counters:

It covers the last 36 seconds of the previous minute (12:00:00–12:01:00)

It covers the first 24 seconds of the current minute (12:01:00–12:01:24)

Say the previous minute saw 80 requests and the current minute has seen 30 so far. You don't know exactly how those 80 requests were distributed: they could've all been at the start, or all at the end. So you assume they were spread evenly.

If you assume even spread:

The 36 seconds of the previous minute we still care about = 60% of that minute = roughly 60% of those 80 requests = 48

The current minute's count is whatever we've seen so far = 30

Total estimate: 48 + 30 = 78 requests in the last 60 seconds.

That's the formula: 80 × (1 - 0.4) + 30 = 78. The 0.4 is the fraction of the current minute that has already elapsed (24 of 60 seconds), so (1 - 0.4) is just "the part of the previous window we still need to count."
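The same arithmetic as a sketch, assuming one in-memory limiter per user and timestamps in seconds since 12:00:00. The class and method names are made up for illustration; this is not Cloudflare's implementation.

```python
class SlidingWindowCounter:
    """Sliding window counter: two counts per user, no timestamps."""

    def __init__(self, limit: int, window_seconds: int = 60):
        self.limit = limit
        self.window_seconds = window_seconds
        self.window_id = None
        self.previous_count = 0
        self.current_count = 0

    def _roll(self, now: float) -> None:
        window_id = int(now // self.window_seconds)
        if self.window_id is None:
            self.window_id = window_id
        elif window_id == self.window_id + 1:
            # The current minute just became the previous minute.
            self.previous_count, self.current_count = self.current_count, 0
            self.window_id = window_id
        elif window_id > self.window_id + 1:
            # More than a full window of silence: nothing left to count.
            self.previous_count = self.current_count = 0
            self.window_id = window_id

    def estimate(self, now: float) -> float:
        self._roll(now)
        elapsed = (now % self.window_seconds) / self.window_seconds
        # Assume the previous window's requests were spread evenly.
        return self.previous_count * (1 - elapsed) + self.current_count

    def allow(self, now: float) -> bool:
        if self.estimate(now) >= self.limit:
            return False
        self.current_count += 1
        return True


limiter = SlidingWindowCounter(limit=100)
for i in range(80):                 # 80 requests during 12:00
    limiter.allow(now=i * 0.5)
for i in range(30):                 # 30 requests so far during 12:01
    limiter.allow(now=60 + i * 0.5)
estimate = limiter.estimate(now=84.0)   # 12:01:24, about 78 as in the worked example
```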

Why it's an approximation

Because of that assumption: "spread evenly."

If all 80 of the previous minute's requests actually arrived in its last 5 seconds (not spread evenly), our estimate undercounts. If they all arrived at the start, we overcount.

But Cloudflare ran this on 400 million real requests and found:

0.003% of decisions were wrong (≈1 in 33,000)

Average drift from the true count: 6%

So in practice, the "spread evenly" assumption is close enough.

The 99-to-100 Problem

You'll have more than one limiter node. They all need the same view: "how many times has user 4127 hit me in the last minute?"

Two ways this breaks.

The 99-to-100 Problem. Two requests both read count = 99. Both increment to 100. Both think they're under the limit.

You just allowed 101 requests.

The fix: atomic operations. Either Redis Lua scripts (read, check, and write happen as one indivisible step) or sorted-set operations.
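To see what "one indivisible step" buys you, here's an in-process analogue. It is not Redis: a threading.Lock stands in for the atomicity a Lua script would give you on the server, and the class name is invented for this sketch.

```python
import threading


class AtomicCounterLimiter:
    """Check-and-increment as a single critical section."""

    def __init__(self, limit: int):
        self.limit = limit
        self.count = 0
        self._lock = threading.Lock()

    def allow(self) -> bool:
        # Read, check, and write all happen under the lock, so two callers
        # can never both see 99 and both decide they are under the limit.
        with self._lock:
            if self.count >= self.limit:
                return False
            self.count += 1
            return True


limiter = AtomicCounterLimiter(limit=100)
results = []


def worker() -> None:
    for _ in range(50):
        results.append(limiter.allow())


threads = [threading.Thread(target=worker) for _ in range(8)]
for t in threads:
    t.start()
for t in threads:
    t.join()

allowed = sum(results)   # 400 attempts across 8 threads
```

However the threads interleave, exactly 100 of the 400 attempts are allowed. Take the lock away and that guarantee goes with it.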

Synchronization lag. Each node has its own copy. They sync eventually. Pick your battle:

Strict mode → every check hits Redis. Slower. Accurate.

Eventually consistent → counts drift a bit, but you get your latency back.

Most production systems pick the second one and accept the leak.

The headers nobody reads

When you 429 someone, do them a favour:

HTTP/1.1 429 Too Many Requests

RateLimit-Limit: 100 # max requests per window

RateLimit-Remaining: 0 # requests left in this window

RateLimit-Reset: 47 # seconds until the window resets

Retry-After: 47 # seconds client should wait before retrying
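A small helper that produces these headers might look like the sketch below. The function name and its arguments are assumptions for illustration, and adding Retry-After only when the caller is out of quota is a choice made here, not something the headers require.

```python
def rate_limit_headers(limit: int, remaining: int, reset_seconds: int) -> dict:
    """Build the informational headers for a rate-limited endpoint."""
    headers = {
        "RateLimit-Limit": str(limit),                   # max requests per window
        "RateLimit-Remaining": str(max(0, remaining)),   # never report a negative
        "RateLimit-Reset": str(reset_seconds),           # seconds until reset
    }
    if remaining <= 0:
        # Only a throttled caller needs to be told how long to wait.
        headers["Retry-After"] = str(reset_seconds)
    return headers


throttled = rate_limit_headers(limit=100, remaining=0, reset_seconds=47)
healthy = rate_limit_headers(limit=100, remaining=12, reset_seconds=47)
```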

Fail-Open Friday

Here's a question you should ask yourself:

If your rate limiter itself dies, do you fail open or fail closed?

Fail open = let everything through.

Fail closed = block everything.

For a public API: fail open. Don't take the whole product down with the limiter.

For a payments endpoint: fail closed. Better unavailable than charging a customer 47 times.

The right answer depends on what's worse: too much traffic, or no traffic at all. This is Fail-Open Friday.

The day your limiter dies, and the choice you made six months ago either saves your weekend or ruins it.
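In code, that choice is usually one flag per endpoint. A sketch, assuming the limiter signals its own outage with an exception; LimiterUnavailable, check_with_fallback and broken_check are hypothetical names for this example.

```python
class LimiterUnavailable(Exception):
    """Raised when the limiter's backing store cannot be reached."""


def check_with_fallback(check, key: str, fail_open: bool) -> bool:
    """Ask the limiter; if the limiter itself is down, apply the chosen policy."""
    try:
        return check(key)
    except LimiterUnavailable:
        # fail_open=True  -> let the request through
        # fail_open=False -> block it
        return fail_open


def broken_check(key: str) -> bool:
    raise LimiterUnavailable("limiter store timed out")


public_api = check_with_fallback(broken_check, "user-47", fail_open=True)
payments = check_with_fallback(broken_check, "user-47", fail_open=False)
```

With the limiter down, the public API request is let through and the payments request is blocked, which is the policy described above.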

Questions worth asking

Before designing one for a real-world system, stop and ask yourself:

Different tiers? Free vs Pro vs Enterprise?

Strict cap, or are bursts okay?

Static config, or do admins change limits at runtime?

What metrics matter? Allowed, throttled, latency, hit rate?

How should clients retry? Exponential backoff?

Fail open or fail closed?

IP whitelists? Internal traffic exempt?

Most people skip these and go straight to "token bucket vs sliding window." That's how you ship the wrong limiter.
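On the client-retry question, one common answer is exponential backoff with jitter that defers to Retry-After when the server sends it. A sketch under those assumptions; the function name, base delay and cap are illustrative defaults, not recommendations.

```python
import random


def backoff_delay(attempt: int, retry_after=None, base: float = 0.5,
                  cap: float = 30.0, rng=random.random) -> float:
    """Seconds to wait before retry number `attempt` (0-based)."""
    if retry_after is not None:
        # The server told us how long to wait; believe it.
        return float(retry_after)
    ceiling = min(cap, base * (2 ** attempt))   # 0.5, 1, 2, 4, ... capped at `cap`
    return rng() * ceiling                      # full jitter: spread clients out


told_to_wait = backoff_delay(attempt=0, retry_after=47)
third_retry = backoff_delay(attempt=3)                      # somewhere in [0, 4)
capped = backoff_delay(attempt=10, rng=lambda: 1.0)         # worst case, hits the cap
```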

The point

A rate limiter doesn't make your system faster. It makes sure your system survives long enough to be slow 😉. Tools change. Algorithms change. Eras change.

Every API still needs someone at the door, and the team that paid the tax before the 4am call is the one still sleeping when the next attack comes.

Further reading

The primary sources are worth reading directly. Most rate-limiter blog posts (including the one you're reading) ultimately distill from these: