The DevOps terms we all pretend to understand - Part 1: The Latency Family

Latency, throughput, jitter explained with water pipes you'll actually remember.

There's a moment in every developer's career when someone says 'the latency is 200ms' and you nod, smile, and quietly die inside'

Every junior dev I've onboarded in the last 15 years has nodded confidently when I said "latency."

Then quietly Googled it ( well its past tense now ) , let me rephrase it, quietly AI-ied it ( If its a valid world, but who cares 😉 ) .

Honestly, I did the same thing for years. Nodding in stand-ups. Pretending I knew the difference between latency and throughput.

The DevOps alphabet is brutal. Not because the concepts are hard but because we use 4 different words for things that feel the same, and 1 word for things that are completely different.

So here's Part 1 of a series, the terms developers nod through, look up later and somehow forget by next week. Because no body make it simple enough to digest .

Hope After reading this it gets transferred into your hard-disk not just RAM 😉

We're starting with the messiest family of all- The Latency Family.

And since I love explaining concepts using analogies we're using one of the oldest in the book. Water pipes !!

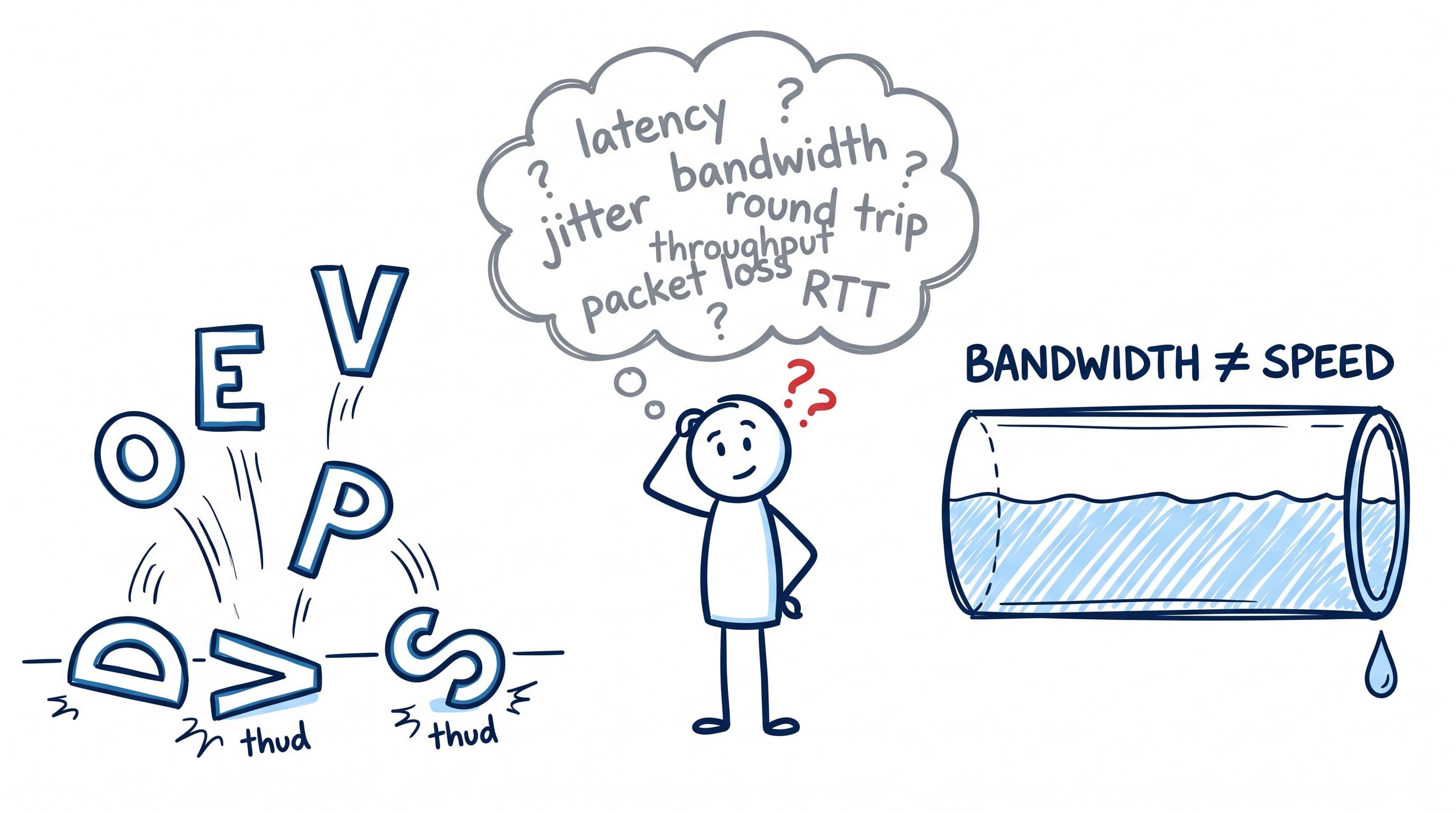

Bandwidth = the diameter of the pipe

Bandwidth is the maximum water that could flow through the pipe.

Not what's flowing right now.

Not what's reaching your tap.

Just the ceiling.

Your "1 Gbps connection" is a 1 Gbps pipe. That's the diameter. Whether any water flows through it is a completely different conversation. So next time you look at any pipe , a bandwidth bell should ring twice at-least ;) .

"Wait - water? My internet doesn't carry water." Fair catch.

Replace "water" with "bits" and the analogy holds perfectly. Pipes don't care what flows through them water, oil, electricity, or 1s and 0s. The rules of flow are the same.

Network engineers literally borrowed "bandwidth," "throttling," and "flow control" from plumbing. Not by accident. Because pipes are the cleanest way humans already think about stuff moving through a channel over time.

So when I say "water," your brain should hear "bits." Same pipe. Different cargo.

Throughput = the water actually flowing through

Throughput is what's actually moving right now.

Could be way less than bandwidth. Tap half-open. Blockage somewhere. Pump too weak. Doesn't matter. Bandwidth is the promise. Throughput is the reality.

This is why "we have 1 Gbps why is the app slow" is the wrong question.

You have a 1 Gbps pipe. You're getting 80 Mbps of water through it. The pipe isn't the problem.

Latency = time till the first drop

Turn the tap on. How long till the first drop hits the sink?

That's latency.

Not how much water comes out. Not how fast it flows after that. Just how long till something arrives.

This is the one developers fundamentally confuse with throughput.

Latency = time till water.

Throughput = how much water per second.

A 100ms latency API can have great throughput.

A 5ms latency API can have terrible throughput

They measure different things entirely.

Round Trip Time = there and back

Round Trip Time = the whole conversation

Picture two construction workers laying a long pipe between them.

Worker 1 stands at the tap end. Worker 2 stands at the far end.

Worker 1 opens the tap and shouts: "Tell me when the water reaches you!"

Worker 2 waits. Water flows through the pipe. Eventually it reaches him. He yells back: "It's here!"

The total time from Worker 1 turning the tap to hearing "It's here!" - that's Round Trip Time.

Latency was one-way. The time for water to reach Worker 2. RTT is the whole conversation. There and back.

When network engineers say "ping is 80ms" they mean RTT. The packet went there and came back in 80ms.

When a frontend dev says "the API call took 80ms" - they also mean RTT, even if they don't realise it.

Same number. Different mental model. That's where bugs live.

Jitter = same average. different experience.

Sometimes water gushes. Sometimes it dribbles. Sometimes it pauses entirely.

Average flow is fine. But you can't shower like this , well unless there's a water crisis going on. Then dribble-mode suddenly feels like luxury 😉

That's jitter - Inconsistent latency.

Your monitoring dashboard says "average response time: 120ms ✅"

Meanwhile your users are getting 50ms, then 400ms, then 60ms, then 800ms.

Average is fine. Experience is broken.

This is why p50, p95, p99 exist. Average is a lie when jitter is high. We'll cover percentiles in Part 3 but remember the principle:

Average is the most dangerous metric in your dashboard.

Packet Loss = the pipe leaks

Cracks in the pipe. Water sprays out the sides. Less water reaches the end. Doesn't matter how fat the pipe is. Doesn't matter how much pressure you have. Leaks are leaks.

In networking, packet loss means some of your data simply never arrives. TCP retries it. UDP doesn't even tell you. Either way, you're paying a cost.

This is where engineers chase the wrong fix for weeks.

"Why is the API slow?" scales up servers

"Why is the API slow?" adds caching "Why is the API slow?"

Finally checks the network "Oh. We have 3% packet loss between two regions."

As I always say Production has a PhD in drama 🙏 .

Congestion = the 7 AM shower rush

Same pipe. Same diameter. Same pressure rating.

But now everyone in the building turns on their shower at 7 AM. Why ?

May be all have same office timing. Pressure drops for everyone. That's congestion. Demand exploded.

Pipe didn't shrink. Capacity is fixed, but suddenly everyone wants a piece.

This is your service at 9 AM Monday when every laptop in the company tries to log in at once. The Wi-Fi router didn't change. The auth server didn't shrink. The 7 AM rush just moved into the office!

The one diagram I want you to remember

A fat pipe with low pressure delivers less water than a thin pipe with high pressure.

Read that twice. That's why upgrading from 100 Mbps to 1 Gbps sometimes makes zero difference to your users.

You upgraded the pipe. You didn't fix the pressure. You didn't fix the leaks. You didn't fix the 7 AM rush. You just bought a bigger pipe and got the same water.

The actual lesson

Engineers love to optimise the metric they can see. Bandwidth is visible. It's on the invoice. It's a number you can quote in meetings.

Latency, jitter, packet loss, congestion these are invisible until something breaks.

Most "performance problems" aren't bandwidth problems :-

They're pressure problems.

Or leak problems.

Or 7 AM problems.

Remember the network isn't slow. Your mental model is !!

What's next

This was Part 1 of The DevOps terms we all pretend to understand

Coming up:

Part 2: SLA vs SLO vs SLI. The three siblings everyone confuses, and the one your manager quotes wrong in every incident review.

Part 3: p50, p95, p99 - and why "average response time" is a beautiful lie.

Part 4: Blue-green vs canary vs rolling vs shadow. The deployment menu nobody explains.

Part 5: Logs, metrics, traces - three tools, three jobs, one giant confusion.

If this helped, the best compliment is sharing it with the junior on your team who's been searching these silently for months on the internet.

We've all been that person. Some of us still are 😅